Accidentally Discovering Computer Graphics … in a Galaxy Far Far Away.

In 2001 I was hired, out of university, as an animator at LucasArts (RIP).

My job? Fly spaceships for Star Wars Episode II game cutscenes.

“Someone will pay me to do what?!?!?”

My bachelor’s degree was in computer engineering, but I had always done animation as a hobby.

Somehow, my hobby reel of animated shorts (featuring everything from Salty the Hanukah Seal to Moshe’s Roommate) beat out the thousands of art school graduates and I landed the entry-level job.

It was an exciting time at LucasFilm – smack between Episodes I and II.

As the only engineer on the art team, I had a unique view of the technical challenges faced by some of the most talented artists in the world.

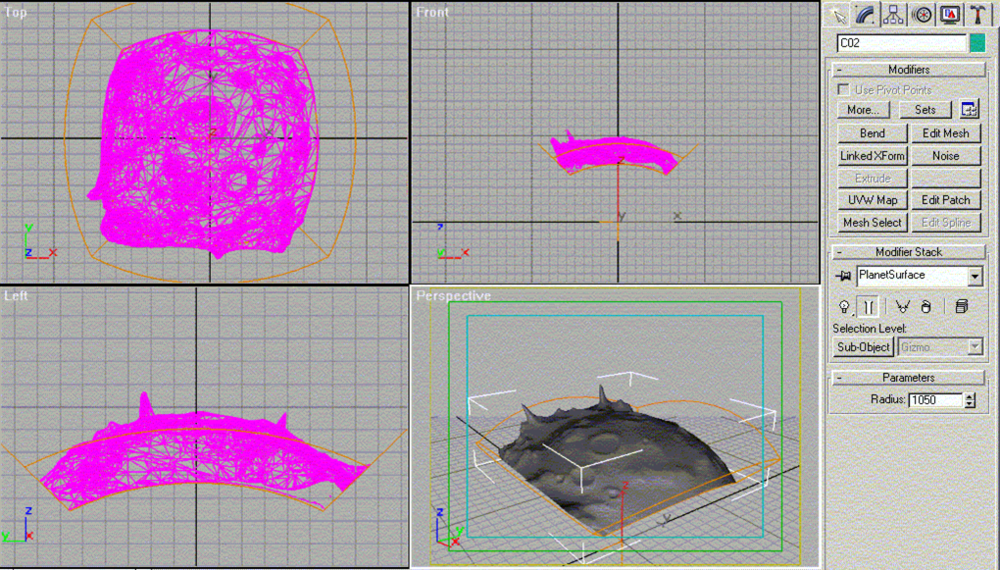

“My terrain tool only generates flat terrain – but we want a level where you can fly around a round asteroid!”

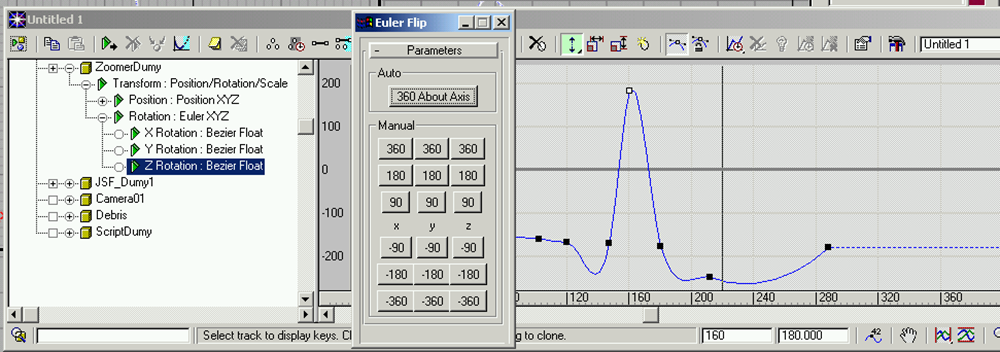

“Euler flipping is the devil!!!!”

So I got to work building tools to make the artists more productive. And most importantly – to enable them to realize their vision without being blocked by technical limitations.

The tools themselves weren’t anything revolutionary…

The adventure here stemmed from the fact that I hadn’t taken any computer graphics courses yet. So I didn’t understand graphics fundamentals: how texture mapping, rasterization, and so on actually worked. I had to figure this out entirely on my own, reverse-engineering the basic math behind computer graphics. All I had in my academic ‘toolbox’ at the time was fundamental linear algebra and trigonometry. From there I came up with a few creative solutions to basic computer graphics problems. In retrospect, the thought process (and series of trial-and-error approaches) made for a unique and interesting introduction to computer graphics. In many cases, I was forced to discover the principles of computer graphics myself.

The biggest project I took on was a department-wide light baking system. The PS2 couldn’t handle real-time shadows. The solution was to bake them into the texture, but it was 2001 – and 3DSMax and Maya didn’t have light baking tools. So there went the artists, manually drawing shadows into textures in Photoshop.

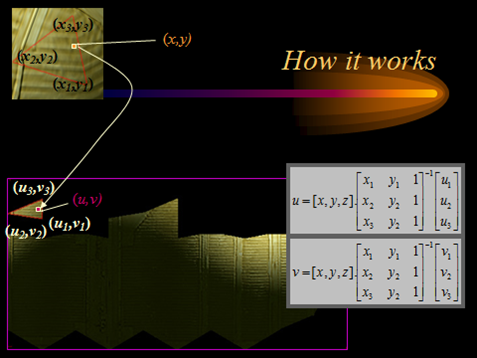

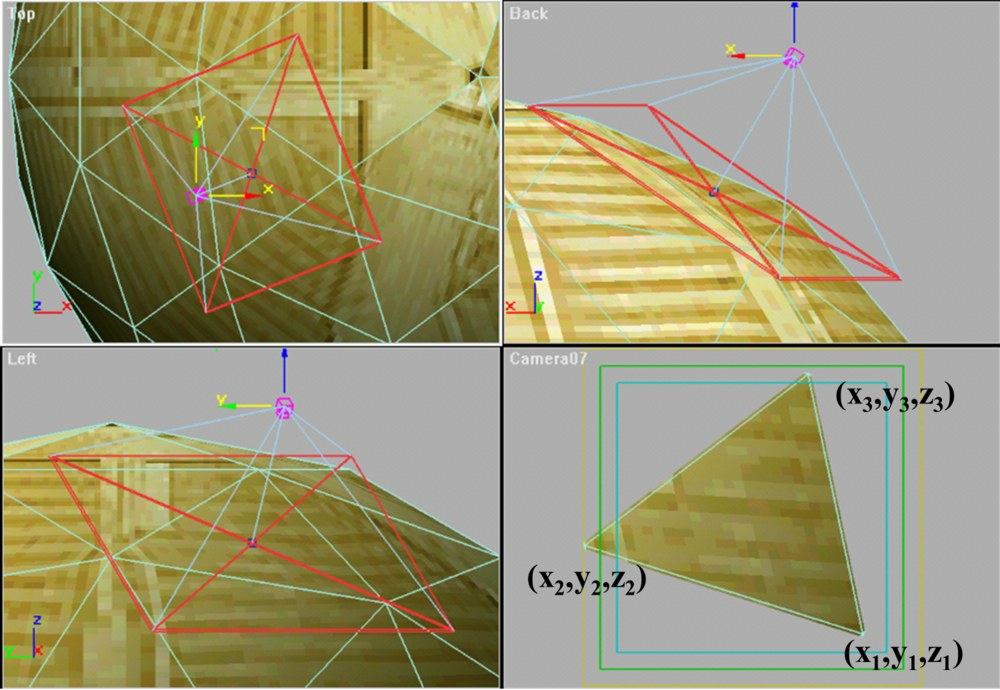

The best solution I could come up with was to render the object triangle-by-triangle in the 3DSMax/Maya renderer (aligning a camera to each triangle, isolating it with tight clipping planes, and rendering), and then re-project each rendered triangle back into texture-space.

Key discoveries:

Texture Mapping:

A little bit of matrix math (inverse, left-multiply) and I had a matrix that would convert a point on a triangle in world space to a point in texture-space. Reverse-engineered how texture mapping works!
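Here is a minimal sketch of what that matrix math might look like, not the original tool's code. It assumes the triangle is given as three world-space points with one UV pair each; the names `world_to_uv_mapper`, `tri_world` and `tri_uv` are made up for illustration. The 3x3 matrix whose columns are the two triangle edges and the normal gets inverted, and the first two rows of that inverse turn a world-space offset into weights along the edges, which are then applied to the UV edges.

```python
def sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])

def dot(a, b):
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]

def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def world_to_uv_mapper(tri_world, tri_uv):
    """Return a function mapping a world-space point on the triangle to (u, v)."""
    p0, p1, p2 = tri_world
    e1, e2 = sub(p1, p0), sub(p2, p0)   # triangle edges in world space
    n = cross(e1, e2)                    # normal, makes [e1 e2 n] invertible
    det = dot(e1, cross(e2, n))          # determinant of the matrix [e1 e2 n]
    if abs(det) < 1e-12:
        raise ValueError("degenerate triangle")

    # First two rows of the inverse of [e1 e2 n]: they recover the
    # weights (a, b) such that point = p0 + a*e1 + b*e2.
    row_a = tuple(c / det for c in cross(e2, n))
    row_b = tuple(c / det for c in cross(n, e1))

    (u0, v0), (u1, v1), (u2, v2) = tri_uv

    def to_uv(point):
        d = sub(point, p0)
        a, b = dot(row_a, d), dot(row_b, d)
        # Apply the same weights to the triangle's edges in texture space.
        return (u0 + a * (u1 - u0) + b * (u2 - u0),
                v0 + a * (v1 - v0) + b * (v2 - v0))

    return to_uv
```

Calling `world_to_uv_mapper(...)` once per triangle and reusing the returned function for every point keeps the inverse from being recomputed per pixel.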

Rasterization:

My naïve approach to triangle rasterization was to render a rectangle that bounded the triangle and perform a point-in-triangle test at each pixel. What did I use for the test? I created vectors between the point and the three vertices of the triangle, then added up the angles between those vectors. If they added up to 360 degrees, I was inside the triangle. This resulted in triangles that were slightly ’rounded’, which didn’t matter for the final result.
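The angle-sum test could be sketched roughly like this. It is a reconstruction under assumptions, not the original code: the function names, the pixel-center sampling at `+0.5`, and the tolerance `tol` are all invented here. A loose tolerance lets in pixels just outside the edges, which is one plausible (but unconfirmed) reason for the rounded look.

```python
import math

def angle_sum_inside(p, tri, tol=1e-6):
    """True if the angles subtended at p by the triangle's vertices sum to ~360 degrees."""
    vecs = [(vx - p[0], vy - p[1]) for vx, vy in tri]
    total = 0.0
    for i in range(3):
        ax, ay = vecs[i]
        bx, by = vecs[(i + 1) % 3]
        la, lb = math.hypot(ax, ay), math.hypot(bx, by)
        if la == 0.0 or lb == 0.0:
            return True  # p sits exactly on a vertex
        # Clamp to guard acos against tiny floating-point overshoot.
        cos_angle = max(-1.0, min(1.0, (ax * bx + ay * by) / (la * lb)))
        total += math.acos(cos_angle)
    return abs(total - 2.0 * math.pi) < tol

def rasterize(tri, width, height, tol=1e-6):
    """Test every pixel center in the triangle's bounding box; return covered (x, y) pixels."""
    xs = [v[0] for v in tri]
    ys = [v[1] for v in tri]
    x_min = max(0, math.floor(min(xs)))
    x_max = min(width - 1, math.ceil(max(xs)))
    y_min = max(0, math.floor(min(ys)))
    y_max = min(height - 1, math.ceil(max(ys)))
    covered = set()
    for y in range(y_min, y_max + 1):
        for x in range(x_min, x_max + 1):
            if angle_sum_inside((x + 0.5, y + 0.5), tri, tol):
                covered.add((x, y))
    return covered
```

The more common approach today uses edge functions (signed areas) instead of `acos`, which avoids both the trigonometry and the tolerance, but the angle-sum version needs nothing beyond basic trig.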

Forward-vs-backward filter projection:

My first attempt to project into texture-space was to take the rendered result, transform each pixel location into texture space, and draw a point on the output texture. This ‘scatter’ approach resulted in holes in texture-space. I initially addressed this by rendering more pixels, but this was clearly not the right solution. I realized I needed to invert my transformation matrix to ‘pull’ the color value from the source triangle. Backward filter projection…discovered!
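The difference between the two approaches shows up even in a toy case. In the sketch below, a plain integer upscale stands in for the real triangle-to-texture transform (an assumption made to keep the example small), and the function names are hypothetical. The forward version pushes each source pixel to one destination and leaves `None` holes; the backward version visits every destination pixel and pulls a value through the inverse mapping.

```python
def forward_scatter(src, scale):
    """Push each source pixel to its transformed location; unvisited texels stay None."""
    h, w = len(src), len(src[0])
    out = [[None] * (w * scale) for _ in range(h * scale)]
    for y in range(h):
        for x in range(w):
            out[y * scale][x * scale] = src[y][x]
    return out

def backward_gather(src, scale):
    """For each output texel, apply the inverse transform and pull the source color."""
    h, w = len(src), len(src[0])
    out = [[None] * (w * scale) for _ in range(h * scale)]
    for oy in range(h * scale):
        for ox in range(w * scale):
            # Inverse of "multiply by scale" is "divide by scale" (nearest texel).
            out[oy][ox] = src[oy // scale][ox // scale]
    return out

def count_holes(img):
    return sum(1 for row in img for value in row if value is None)
```

Because the backward loop runs over the output, every texel is written exactly once no matter how much the transform stretches the image.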

When the result looked pixelated, I came up with an algorithm to smooth it out – accidentally discovering bilinear filtering.
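For reference, standard bilinear filtering looks something like the sketch below; the smoothing algorithm described above may have differed in its details. It assumes a single-channel image stored as a list of rows and sample coordinates expressed in texel units, and the name `bilinear_sample` is just illustrative.

```python
import math

def bilinear_sample(img, x, y):
    """Blend the four texels around (x, y), weighted by distance to each."""
    h, w = len(img), len(img[0])
    # Clamp the sample position to the image bounds.
    x = min(max(x, 0.0), w - 1)
    y = min(max(y, 0.0), h - 1)
    x0, y0 = int(math.floor(x)), int(math.floor(y))
    x1, y1 = min(x0 + 1, w - 1), min(y0 + 1, h - 1)
    fx, fy = x - x0, y - y0   # fractional position inside the texel cell
    top = img[y0][x0] * (1.0 - fx) + img[y0][x1] * fx
    bottom = img[y1][x0] * (1.0 - fx) + img[y1][x1] * fx
    return top * (1.0 - fy) + bottom * fy
```

Used inside a backward projection loop, this replaces the nearest-texel lookup with a weighted average of the four neighbors, which is what removes the blocky look.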

I couldn’t have asked for a better introduction to computer graphics. Thank you, George.

oh man. memories! great descriptions too; i always love stories of deriving things like this without the basic domain knowledge, then learning it later and looking back with bemusement. always entertaining.

btw, props on POSSEing to medium! the indieweb folks would be proud. https://indiewebcamp.com/POSSE